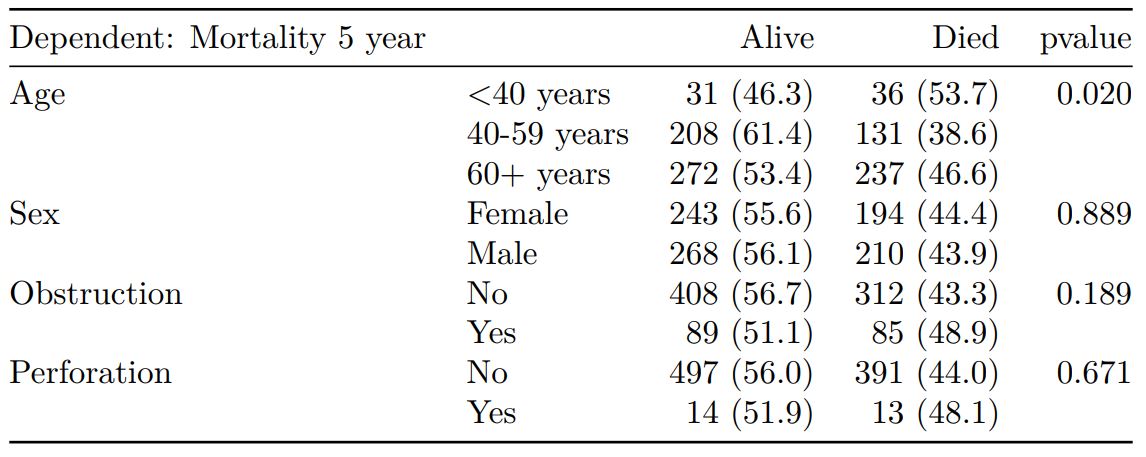

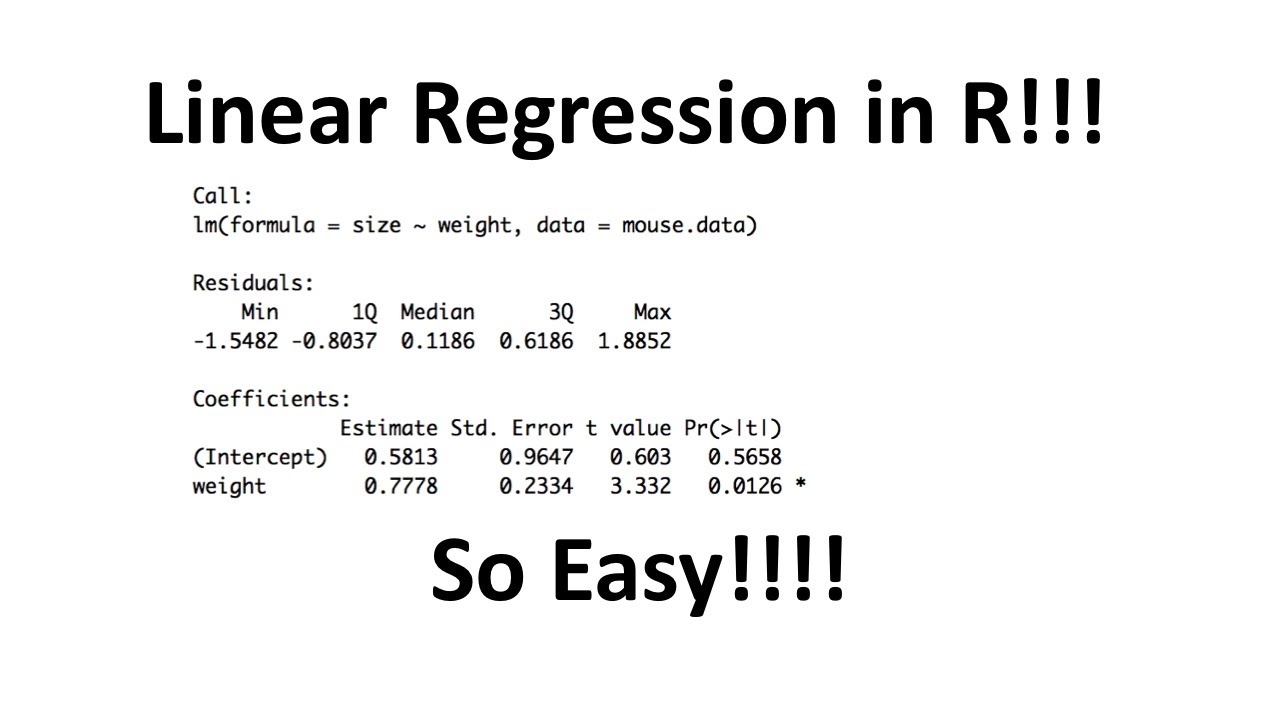

You can use the following methods to extract regression coefficients from the lm() function in R:

Method 1: Extract Regression Coefficients Only

model$coefficients

Method 2: Extract Regression Coefficients with Standard Error, T-Statistic, & P-values

summary(model)$coefficients

The following example shows how to use these methods in practice.

Example: Extract Regression Coefficients from lm() in R

Suppose we fit a multiple linear regression model in R. The first model we fit is a regression of the outcome ( ) against all the other variables in the data set. The model summary provides a brief numerical summary of the residuals as well as a table of the estimated regression results, followed by a line such as:

Residual standard error: 3.193 on 3 degrees of freedom

To view the regression coefficients only, we can use model$coefficients. We can use these coefficients to write the fitted regression equation.

To view the regression coefficients along with their standard errors, t-statistics, and p-values, we can use summary(model)$coefficients. The p-values are shown for each regression coefficient in the model. The row for rebounds, for example, lists the estimate, standard error, t-statistic, and p-value in that order:

Rebounds 2.820224 1.6117911 1.749745 0.178471275

Each row tests one coefficient individually. In the output of a different model, for instance, a t-value of 8.923 and a p-value of less than 2e-16 correspond to the individual test of the hypothesis that "the true coefficient for variable neck equals 0".

We can also access specific values in this output. For example, we can access the p-value for the points variable alone, or the p-values for all of the regression coefficients at once:

0.002175313 0.022315418 0.208600183 0.178471275

A p-value above .05, such as the 0.178471275 for rebounds, means that the true coefficient could easily be zero, or even have the opposite sign. (After all, your coefficient is just an estimate of the true value based on your particular sample.) A small sample, such as one with only 8 observations, contributes to a large p-value.

You can use similar syntax to access any of the values in the regression output.
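The two extraction methods can be sketched end to end as follows. This is a minimal illustration, not the original example: it assumes R's built-in mtcars data set as a stand-in, and the predictors wt, hp, and qsec are chosen purely for demonstration.

```r
# Illustrative data: the built-in mtcars data set stands in for the
# article's data frame (an assumption, not the original data).
model <- lm(mpg ~ wt + hp + qsec, data = mtcars)

# Method 1: coefficients only, returned as a named numeric vector
coefs <- model$coefficients
print(coefs)

# Method 2: matrix with columns Estimate, Std. Error, t value, Pr(>|t|)
coef_table <- summary(model)$coefficients
print(coef_table)

# p-values for all terms: the "Pr(>|t|)" column (the fourth column)
p_values <- coef_table[, "Pr(>|t|)"]
print(p_values)

# p-value for a single term, selected by row name and column name
p_wt <- coef_table["wt", "Pr(>|t|)"]
print(p_wt)
```

Indexing by row and column name rather than by position keeps the code readable and guards against picking the wrong column if the table layout differs.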

Running a regression in R yields unstandardized coefficients, not standardized ones. However, as spelled out by, e.g., Gelman and Hill (2007), standardizing values is advantageous in many situations. This post shows how to run a regression in R using standardized values as inputs ("standardized regression" for short, as some dub it). The advantage of standardizing input variables is the simpler comparison of importance. It can be seen as undesirable that the scaling (SD) of the input variable determines (in part) the regression coefficient. For instance, measuring the "power" of a car in horsepower or in kilowatts will strongly influence the value of the regression coefficient. Similarly, measuring the distance walked in kilometers or in millimeters will have a profound effect on the respective regression coefficient for, say, the amount of fat burned (in grams or in kilograms). Hence, putting all variables on the same scale makes it easier to compare the "importance" of each variable.
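One way such a standardized regression might look is sketched below. It assumes the built-in mtcars data set and z-standardizes both the outcome and the predictors with scale(); standardizing the outcome as well is a choice made for this sketch, not something the text above prescribes.

```r
# Illustrative data: built-in mtcars (an assumption for this sketch).
vars <- c("mpg", "wt", "hp")

# scale() centers each column at 0 and rescales it to SD 1
mtcars_std <- as.data.frame(scale(mtcars[, vars]))

# Same model on raw and on standardized variables
model_raw <- lm(mpg ~ wt + hp, data = mtcars)
model_std <- lm(mpg ~ wt + hp, data = mtcars_std)

print(coef(model_raw))  # slopes depend on the units of wt and hp
print(coef(model_std))  # slopes are in SD units and directly comparable

# Cross-check: standardized slope = raw slope * sd(x) / sd(y)
beta_wt_manual <- coef(model_raw)[["wt"]] * sd(mtcars$wt) / sd(mtcars$mpg)
print(beta_wt_manual)
```

Because every variable is centered, the intercept of the standardized model is zero up to rounding error, and each slope reads as the expected change in the outcome, in SDs, per one-SD change in that predictor.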
